There are different ways to relate brain measurements to computational models. For example, the 2019 edition of the Algonauts Challenge used representational similarity analysis (RSA; Kriegeskorte et al., 2008).

As in the 2021 edition, we leave it up to you to choose how to predict brain responses (see Challenge Rules). As an example, we walk you through one common approach called linearizing encoding (Naselaris et al., 2011; Wu et al., 2006), in which the response of each vertex is predicted independently from multiple features provided by a computational model, typically by linear regression.

The development kit includes an example implementation of the linearizing encoding model that uses AlexNet as the computational model.

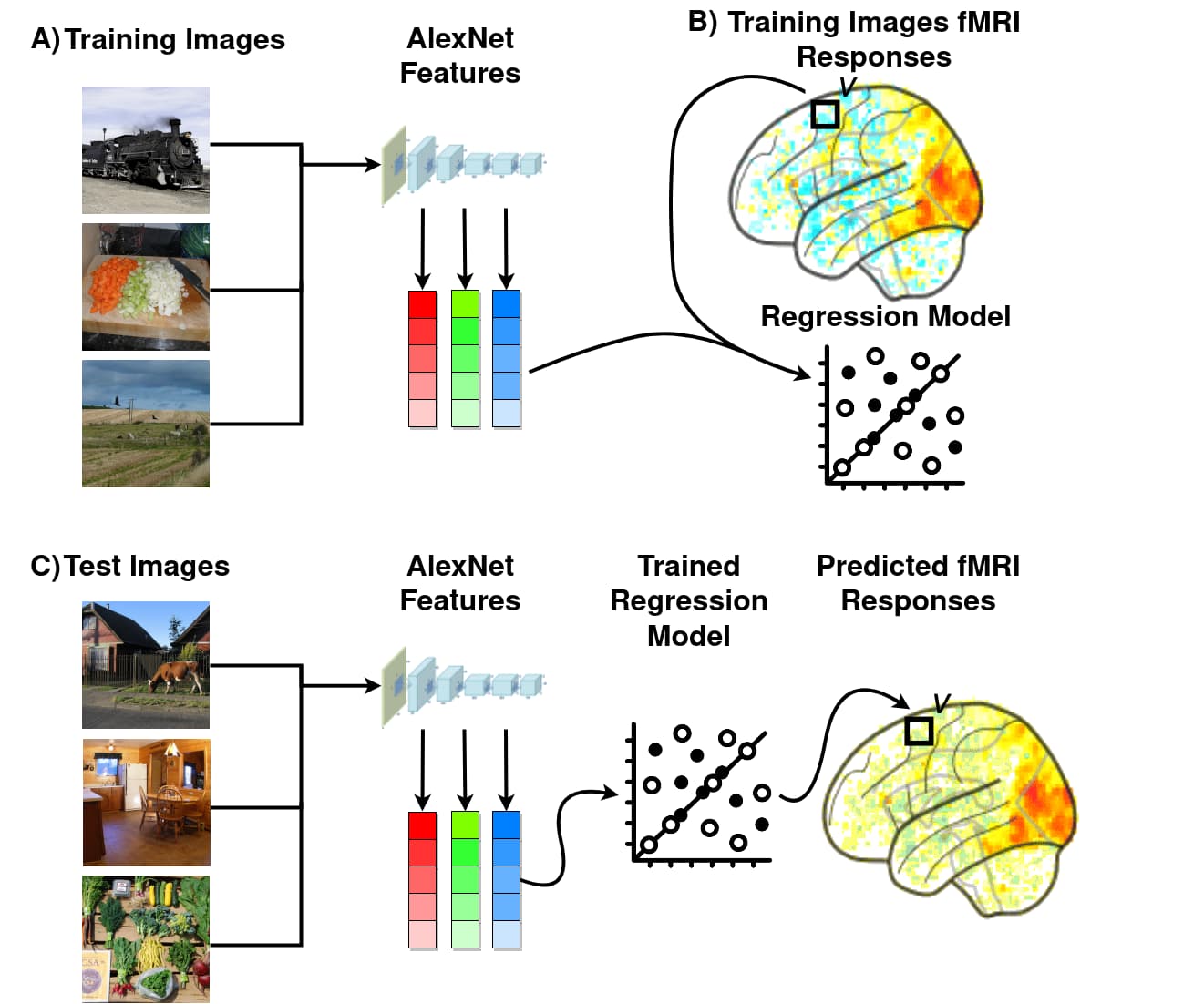

Figure 1. A) First, we use a computational model to extract the visual features of a given set of train images. B) We then estimate a linear mapping (via regression) between these model features and the brain responses of each vertex to the same train images. C) After the linear mapping has been estimated, we use it to predict (i.e., to encode) the vertex responses to the test images.

The approach has three steps:

Step 1. A computer vision model is used to extract features from the images (Fig. 1A). This changes the format of the data (from pixels to model features) and typically reduces its dimensionality. The features of a given model are interpreted as a hypothesis about the features that a given brain area might use to represent the stimulus.
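As a rough illustration of Step 1, the sketch below turns a batch of images into a feature matrix with one row per image. The function `extract_features` and its fixed random filter bank are hypothetical stand-ins for a real network such as AlexNet; they are not taken from the development kit, and the image size and feature count are arbitrary assumptions.

```python
import numpy as np


def extract_features(images, n_features=256, seed=0):
    """Hypothetical stand-in for a vision model: flatten, project, rectify."""
    n_images = images.shape[0]
    # Flatten each image into a vector of pixel values: (n_images, n_pixels)
    pixels = images.reshape(n_images, -1).astype(np.float64)
    # A fixed seed means the same "model" is used on every call
    rng = np.random.default_rng(seed)
    filters = rng.standard_normal((pixels.shape[1], n_features))
    filters /= np.sqrt(pixels.shape[1])
    # Linear projection followed by a ReLU nonlinearity
    return np.maximum(pixels @ filters, 0.0)


# Synthetic "images": 20 RGB images of 32 x 32 pixels (assumed sizes)
image_rng = np.random.default_rng(1)
train_images = image_rng.random((20, 32, 32, 3))

train_features = extract_features(train_images)
print(train_images.reshape(20, -1).shape, "->", train_features.shape)
```

Each image goes from 3072 pixel values to 256 feature values here. With a real network, the same role would be played by the activations of one or more of its layers.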

Step 2. Features of the computational model are linearly mapped onto each vertex's responses (Fig. 1B) using the provided train set. This step is needed as there is not necessarily a one-to-one mapping between vertices and model features. Instead, each vertex's response is hypothesized to correspond to a weighted combination of activations of multiple features of the model.
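The mapping in Step 2 can be sketched as a multi-output least-squares problem, where each vertex gets its own column of weights. The example below is a minimal sketch on synthetic data, not the development kit's implementation: the array names, the sizes, and the use of plain ordinary least squares via `np.linalg.lstsq` are assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n_train, n_features, n_vertices = 200, 10, 5  # assumed toy sizes

# Synthetic model features (one row per train image) and vertex responses
X_train = rng.standard_normal((n_train, n_features))
true_W = rng.standard_normal((n_features, n_vertices))
Y_train = X_train @ true_W + 0.1 * rng.standard_normal((n_train, n_vertices))

# Append a constant column so that each vertex also gets an intercept
X_design = np.hstack([X_train, np.ones((n_train, 1))])

# One least-squares fit per vertex; lstsq solves all columns of Y_train at once,
# and each column is solved independently of the others
W_hat, *_ = np.linalg.lstsq(X_design, Y_train, rcond=None)
weights, intercepts = W_hat[:-1], W_hat[-1]

print(weights.shape, intercepts.shape)
```

Column `v` of `weights` holds the weighted combination of model features that best predicts vertex `v` on the train set. When the number of features is large relative to the number of train images, a regularized regression may be a better choice than ordinary least squares.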

Step 3. The mapping estimated on the train set is applied to the model features of the test images, thus predicting the fMRI responses to those images (Fig. 1C). The predicted fMRI test data are then compared against the ground-truth (withheld) fMRI test data. Because the test data play no part in estimating the mapping, this comparison is an out-of-sample measure of model fit and is not inflated by overfitting. In the context of the Challenge, we do the comparison for you, since we keep the test set brain data hidden.

Testing the model: If the computational model is a suitable model of the brain, the predictions of the encoding model will fit the empirically recorded data well. In the Challenge, we test your predicted brain responses against the held-out brain responses to the test images. If you want to evaluate your model yourself before you submit, you can split the data we provide into a train set and a validation set. The development kit provides an example of how to do this.
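Because the test set brain responses are withheld, a local estimate of performance has to come from a validation split of the provided data. The sketch below, on synthetic data, holds out part of the images, fits the mapping on the rest, predicts the held-out responses, and scores each vertex. The 80/20 split, the variable names, and the use of Pearson correlation as the score are assumptions for illustration, not necessarily what the Challenge or the development kit uses.

```python
import numpy as np

rng = np.random.default_rng(0)
n_images, n_features, n_vertices = 300, 10, 5  # assumed toy sizes

# Synthetic model features and noisy vertex responses
features = rng.standard_normal((n_images, n_features))
true_W = rng.standard_normal((n_features, n_vertices))
responses = features @ true_W + rng.standard_normal((n_images, n_vertices))

# Shuffle the images and hold out 20% of them as a validation set
order = rng.permutation(n_images)
n_train = int(0.8 * n_images)
train_idx, val_idx = order[:n_train], order[n_train:]


def add_intercept(X):
    """Append a column of ones so the fit includes an intercept."""
    return np.hstack([X, np.ones((X.shape[0], 1))])


# Estimate the linear mapping on the train split only
W_hat, *_ = np.linalg.lstsq(
    add_intercept(features[train_idx]), responses[train_idx], rcond=None
)

# Apply the estimated mapping to the held-out images
val_pred = add_intercept(features[val_idx]) @ W_hat


def columnwise_pearson(a, b):
    """Pearson correlation between matching columns of a and b."""
    a = a - a.mean(axis=0)
    b = b - b.mean(axis=0)
    return (a * b).sum(axis=0) / np.sqrt((a**2).sum(axis=0) * (b**2).sum(axis=0))


# One score per vertex, computed on images the mapping never saw
val_corr = columnwise_pearson(val_pred, responses[val_idx])
print(val_corr.round(2))
```

The same fitted mapping would then be applied to the features of the test images to produce the predictions for submission.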

Kriegeskorte, N., Mur, M., & Bandettini, P. A. (2008). Representational similarity analysis - connecting the branches of systems neuroscience. Frontiers in Systems Neuroscience, 2, 4. DOI: https://doi.org/10.3389/neuro.06.004.2008

Naselaris, T., Kay, K. N., Nishimoto, S., & Gallant, J. L. (2011). Encoding and decoding in fMRI. NeuroImage, 56(2), 400–410. DOI: https://doi.org/10.1016/j.neuroimage.2010.07.073

Wu, M. C.-K., David, S. V., & Gallant, J. L. (2006). Complete functional characterization of sensory neurons by system identification. Annual Review of Neuroscience, 29(1), 477–505. DOI: https://doi.org/10.1146/annurev.neuro.29.051605.113024